If Gutenberg saw how we publish multi-modal content today, he wouldn’t be impressed; he’d be exhausted. We’ve traded the printing press for a fragmented nightmare of platforms. We write text in one system, record audio in another, generate images in a third, and then spend hours manually stitching them together in a “Headless CMS” that is essentially just a very expensive database.

We are treating multimedia publishing like an artisanal craft when it should be an automated assembly line.

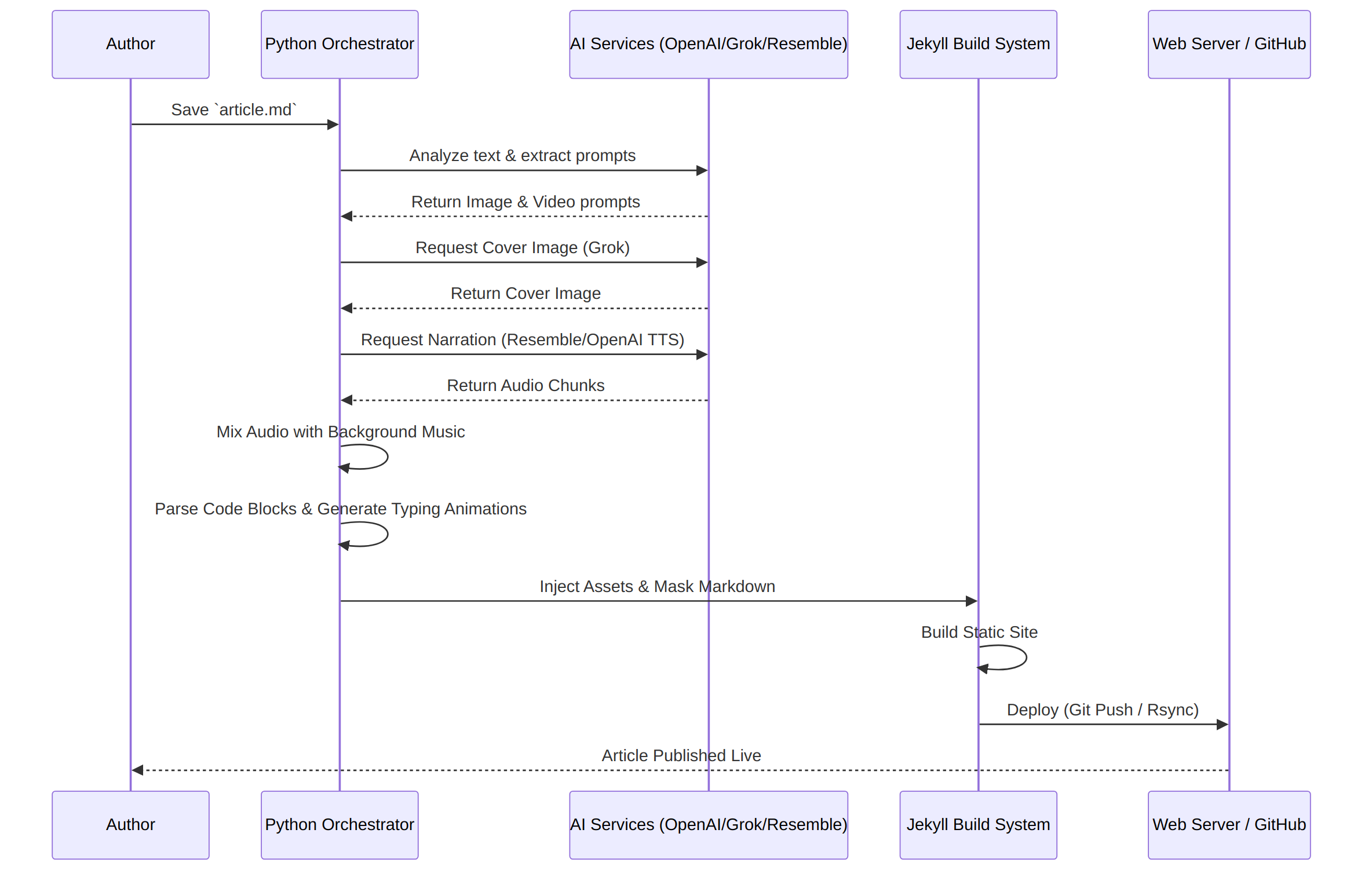

To solve this, I designed and built a proprietary AI-Orchestrated Headless CMS pipeline for Metamatic.net. This system completely decouples content creation from presentation. I simply write raw markdown. A fleet of Python-based AI agents takes over—automatically orchestrating, generating, and mixing the multimedia content—before deploying the final, multi-modal payload to a Jekyll-based frontend.

Here is a technical breakdown of how this pipeline operates.

The Architecture Overview

The core philosophy of the system is treating the markdown text as the absolute source of truth. Everything else—cover images, background music, AI voice narration, and dynamic code animations—is derived from this text via an automated pipeline.

- Content Repository: Local Markdown files supplemented by state management in MongoDB.

- Orchestrator: A custom Python backend (running via MCP) that coordinates API calls to OpenAI, Grok, and Runway for media generation.

- Presentation Layer: Jekyll static site generator, hydrated with generated assets and enhanced with dynamic JavaScript (mprod.js) on the client side.

The Publishing Sequence

When an article is drafted, it moves through several automated gates before reaching production.

Automating Multi-Modal Assets

The true power of this headless approach is not just distributing text, but autonomously building the surrounding media.

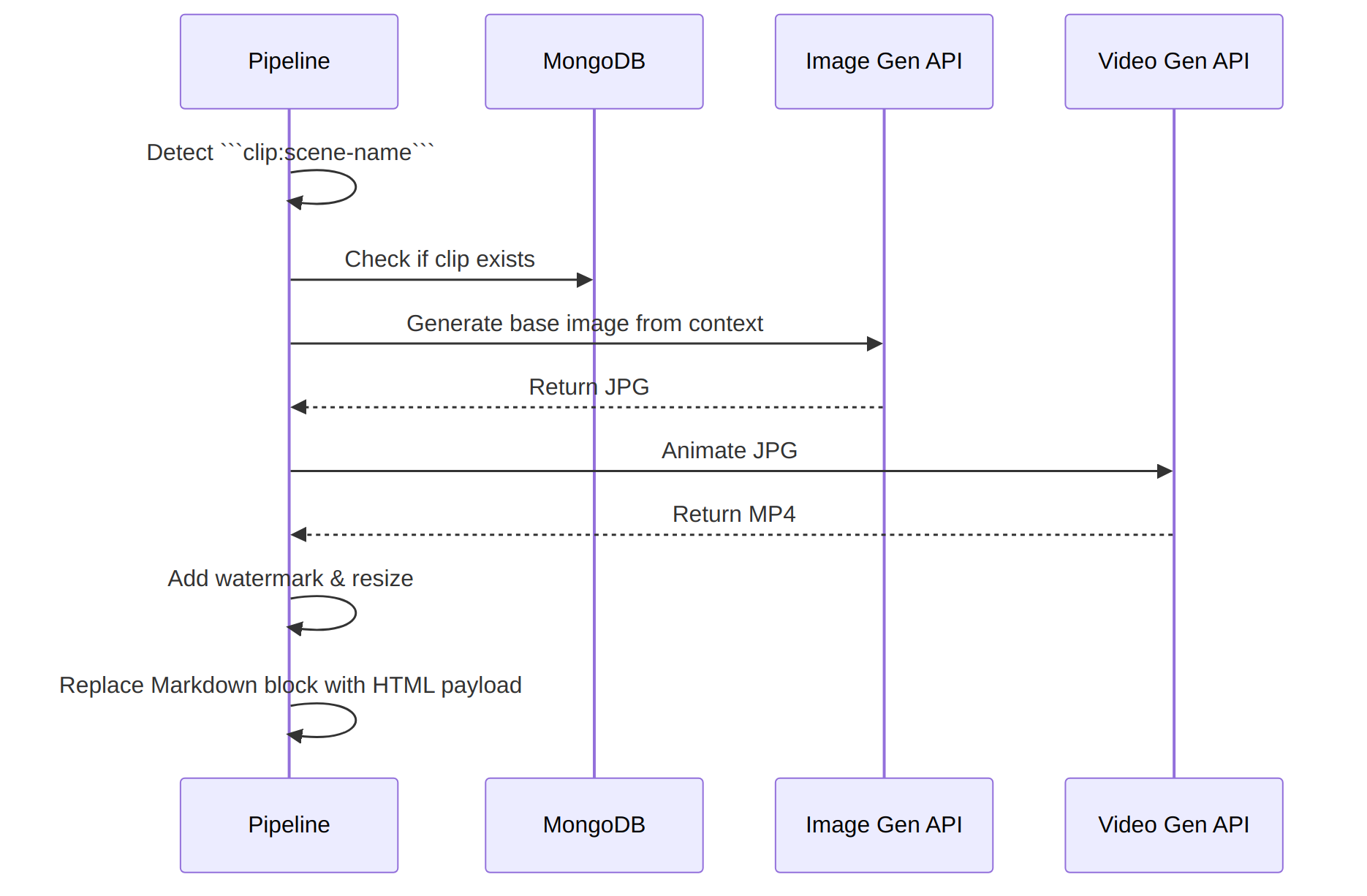

1. The Clip Video System

Instead of manually creating and embedding videos, I built a syntax extension into my markdown. By writing ` ```clip:scene-name `, the pipeline intercepts the block and triggers a multi-step generation process:

- Image Generation: Generates a base frame using AI.

- Video Generation: Sends the frame to a video generation model to animate the scene.

- Deployment: The video is downloaded, watermarked, resized, and moved to the Jekyll asset folder. The markdown block is replaced with a masked div that the frontend JavaScript mounts as a video player.
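To make the interception step concrete, here is a minimal sketch of how a clip block could be detected and swapped for a placeholder. It is an illustration under assumptions, not the actual Metamatic code: the function name `replace_clip_blocks`, the `clip-player` class, the `data-src` attribute, and the `/assets/clips` path are all hypothetical, and the block body is assumed to hold the scene prompt.

```python
import html
import re

# Matches a fenced block whose info string is "clip:<scene-name>".
# `{3} is a regex quantifier: exactly three backticks at the start of a line.
CLIP_BLOCK = re.compile(
    r"^`{3}clip:(?P<scene>[\w-]+)[ \t]*\n(?P<prompt>.*?)^`{3}[ \t]*$",
    re.MULTILINE | re.DOTALL,
)


def replace_clip_blocks(markdown, asset_dir="/assets/clips"):
    """Return (rewritten markdown, list of (scene, prompt) generation jobs)."""
    jobs = []

    def to_placeholder(match):
        scene = match.group("scene")
        # Assumption: the block body is the prompt for the image/video models.
        jobs.append((scene, match.group("prompt").strip()))
        src = html.escape(f"{asset_dir}/{scene}.mp4", quote=True)
        # Hypothetical placeholder markup for the frontend script to mount.
        return f'<div class="clip-player" data-src="{src}"></div>'

    return CLIP_BLOCK.sub(to_placeholder, markdown), jobs
```

The returned job list is what an orchestrator would hand to the image and video generation steps; the rewritten markdown can go to Jekyll as soon as the files exist at the referenced paths.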

2. Narration and Audio Mixing

Accessibility and convenience are critical. The pipeline reads the final article text, generates a pronunciation map, and queries a TTS engine.
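One plausible way to use a pronunciation map is to rewrite tricky terms into a spoken form before the text reaches the TTS engine. The sketch below assumes the map is a plain dictionary from written term to spoken form; the function name `apply_pronunciation_map` and the example entries are hypothetical.

```python
import re


def apply_pronunciation_map(text, pronunciations):
    """Replace whole-term occurrences of each key with its spoken form."""
    if not pronunciations:
        return text
    # Longest keys first, so a longer term wins over a shorter one it contains.
    keys = sorted(pronunciations, key=len, reverse=True)
    pattern = re.compile(
        r"(?<!\w)(" + "|".join(re.escape(k) for k in keys) + r")(?!\w)"
    )
    return pattern.sub(lambda m: pronunciations[m.group(1)], text)
```

Matching is case-sensitive and limited to whole terms, so an entry for `Jekyll` would leave a word that merely contains those letters untouched.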

It doesn’t stop at raw voice. The system utilizes another agent to generate contextual background music, mixes the voiceover with the track using ffmpeg, and outputs a polished podcast-like .mp3 file that is embedded at the top of the article.
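The mixing step could look roughly like the following. This is a sketch under assumptions rather than the pipeline's real command: the function names, file paths, and the `music_volume` default are hypothetical. The ffmpeg pieces it relies on (the `volume` and `amix` filters, `-filter_complex`, `-map`, and the `libmp3lame` encoder) are standard.

```python
import subprocess


def build_mix_command(voice_path, music_path, out_path, music_volume=0.15):
    """Build an ffmpeg command that lays quiet music under a voice track."""
    filter_graph = (
        # Lower the music (input 1) so it sits behind the narration.
        f"[1:a]volume={music_volume}[bg];"
        # Mix both streams; duration=first ends the output with the voice track.
        "[0:a][bg]amix=inputs=2:duration=first:dropout_transition=0[mix]"
    )
    return [
        "ffmpeg", "-y",
        "-i", voice_path,
        "-i", music_path,
        "-filter_complex", filter_graph,
        "-map", "[mix]",
        "-c:a", "libmp3lame", "-q:a", "2",
        out_path,
    ]


def mix_narration(voice_path, music_path, out_path, music_volume=0.15):
    """Run the mix; raises CalledProcessError if ffmpeg exits with an error."""
    cmd = build_mix_command(voice_path, music_path, out_path, music_volume)
    subprocess.run(cmd, check=True)
```

Keeping command construction separate from execution makes the filter graph easy to inspect and test without ffmpeg installed.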

3. Code Typing Animations (Codecasts)

Technical blogs need code snippets, but static blocks can be dry. The pipeline parses the markdown for code blocks and automatically generates “codecast” videos: animations that simulate a developer typing the code in real time, complete with a configurable typo rate to make the typing look human.
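The typing simulation itself can be sketched as a generator of editor states, one per keystroke. This is an illustrative assumption about how such an animation might be driven, not the actual codecast renderer: `typing_frames`, `typo_rate`, and `seed` are hypothetical names, and turning the frames into video is left out.

```python
import random


def typing_frames(code, typo_rate=0.05, seed=None):
    """Yield successive editor buffer states as the code is 'typed'."""
    rng = random.Random(seed)  # a seed makes the animation reproducible
    alphabet = "abcdefghijklmnopqrstuvwxyz"
    buffer = ""
    for ch in code:
        # Only letters are mistyped in this sketch.
        if ch.isalpha() and rng.random() < typo_rate:
            wrong = rng.choice(alphabet.replace(ch.lower(), ""))
            yield buffer + wrong  # the wrong key appears
            yield buffer          # a backspace removes it
        buffer += ch
        yield buffer              # the correct key appears
```

Each yielded string is one frame of the editor contents, so a renderer can draw them in order with a per-keystroke delay. The final frame always equals the original code, whatever the typo rate.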

Why Build This?

While commercial Headless CMS platforms like Contentful or Sanity are excellent for enterprise data distribution, they still require a human to source, edit, and upload media.

By building a custom orchestration layer, I removed that manual step. The system operates as a tireless editorial team: I focus purely on the text and the technical architecture, while the Python agents handle the heavy lifting of multimedia production and deployment.

This decoupled architecture proves that the future of frontend development isn’t just about fetching JSON from an API—it’s about automating the very creation of that payload before it ever hits the wire.

Best Regards,

Heikki Kupiainen / Metamatic Systems